Computers have been used to process information for decades, but the term "Big Data" only came into widespread use by 2011. Big Data has enabled companies to quickly extract business value from a wide variety of sources, including social networks, geolocation data transmitted by phones and other roaming devices, publicly available information from the Internet, and readings from sensors embedded in cars, buildings and other objects.

Analysts use the 3V (or VVV) model to define the essence of Big Data. The name stands for the three key characteristics of Big Data: volume, velocity, and variety.

Big Data refers to large sets of diverse information that are generated, updated and provided by multiple sources. Modern companies use it to work more efficiently, create new products, and ultimately become more competitive. Big Data accumulates every second: even as you're reading this, someone is collecting information about your preferences and browsing activities. Most companies use Big Data to improve customer service, while others use it to improve their operations and predict risk.

For example, VISA uses Big Data to reduce fraudulent transactions, the developers of World of Tanks use it to reduce player churn, the German Ministry of Labour uses it to analyse unemployment benefit applications, and major retailers use it to plan large-scale marketing campaigns that sell as many products as possible.

Working with Big Data can be divided into the following stages:

An important element of working with Big Data is search, which lets you retrieve the information you need in different ways. In the simplest case, it works the same way a Google search does. Data is made available to internal and external parties either for a fee or for free, depending on the terms of ownership. Big Data is in demand among app and service developers, trading companies and telecommunications companies. For business users, information is offered in a visualised, easy-to-understand form: text is presented as concise lists and excerpts, and graphical data as diagrams, charts and animations.
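To make the search step more concrete, here is a minimal sketch of keyword search over a small set of records using an inverted index. It is only an illustration: the function names (`build_index`, `search`) and the sample records are hypothetical and not taken from any particular Big Data product, and real systems add ranking, stemming and distributed storage on top of this idea.

```python
from collections import defaultdict


def build_index(documents):
    """Map each lowercase word to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for word in text.lower().split():
            index[word].add(doc_id)
    return index


def search(index, query):
    """Return the ids of documents that contain every word of the query."""
    words = query.lower().split()
    if not words:
        return set()
    # Start from the first word's matches and intersect with the rest.
    result = set(index.get(words[0], set()))
    for word in words[1:]:
        result &= index.get(word, set())
    return result


# Hypothetical sample records, invented for this example.
documents = {
    1: "card payment declined in Berlin",
    2: "card payment approved in Paris",
    3: "sensor reading from car engine",
}
index = build_index(documents)
matches = search(index, "card payment")
print(sorted(matches))
```

The index is built once and then reused for every query, which is why lookups stay fast even when the number of records grows.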


Handling Big Data requires a specific infrastructure focused on parallel processing and distributed storage of large volumes of data. There is no one-size-fits-all solution for this purpose. Although many factors influence the choice of hardware, the decisive one is the software used for Big Data collection and analysis. Accordingly, the process of purchasing hardware for a company will be as follows:

Thus, each project is unique, and the equipment for its deployment depends on the software chosen. Consider, for example, two server solutions adapted to work with Big Data.

FUJITSU PRIMEFLEX for Hadoop is a powerful and flexibly scalable platform designed for rapid analysis of large data sets of different types. It combines the advantages of a pre-configured hardware platform built on industry-standard components with dedicated open source software. The latter is provided by Cloudera and Datameer. The manufacturer guarantees the compatibility of the system components and the system's efficiency for complex analysis of structured and unstructured data. PRIMEFLEX for Hadoop is offered out-of-the-box, complete with business consulting services for Big Data, integration and maintenance.
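The text does not describe how PRIMEFLEX for Hadoop processes data internally, so the following is only a generic sketch of the map/reduce pattern that Hadoop-style platforms are known for: the data set is split into chunks, each chunk is processed independently, and the partial results are merged. The function names and sample log lines are hypothetical, and a local thread pool stands in for the cluster nodes that would do this work in a real deployment.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from functools import reduce


def map_chunk(lines):
    """Map step: count the words in one chunk of the data set."""
    counts = Counter()
    for line in lines:
        counts.update(line.lower().split())
    return counts


def merge(left, right):
    """Reduce step: combine two partial counts into one."""
    return left + right


def word_count(lines, chunk_size=2, workers=2):
    """Split the input into chunks, count each chunk, then merge the results."""
    chunks = [lines[i:i + chunk_size] for i in range(0, len(lines), chunk_size)]
    # The thread pool is a local stand-in for worker nodes in a cluster.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(map_chunk, chunks))
    return reduce(merge, partials, Counter())


# Hypothetical event log, invented for this example.
log_lines = [
    "login purchase logout",
    "login logout",
    "login purchase purchase",
]
totals = word_count(log_lines)
print(totals.most_common())
```

Because each chunk is counted without reference to the others, the map step can be spread over as many machines as the data volume requires, and only the small partial counts need to be brought together.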

FUJITSU PRIMEFLEX for SAP HANA is an integrated system that makes the most of SAP HANA. It is suitable for storing and processing large amounts of data in RAM in real time. Calculations are performed both locally and in the cloud.

FUJITSU delivers PRIMEFLEX for SAP HANA as a complete package, with value-added services for all phases, from project decision and financing to ongoing operations. The product is based on components and technologies that have been certified for SAP. It covers different architectures, including pre-configured and scalable systems, customised configurations, and virtualised VMware platforms.

Waymo Data Scientist interview

04/04/2023

Waymo Data Scientist interview

04/04/2023

What is intelligent electronic device?

03/04/2023

What is intelligent electronic device?

03/04/2023

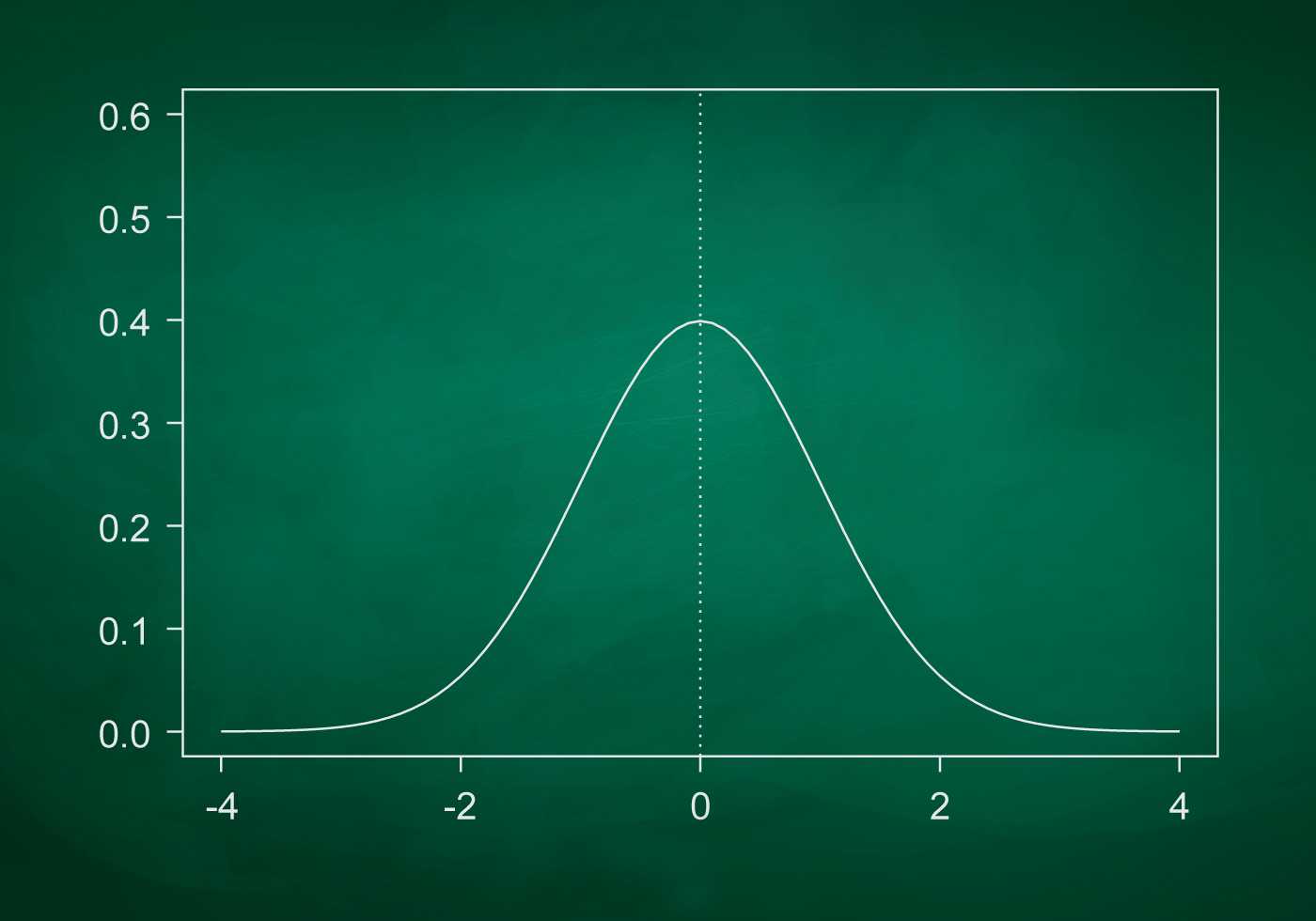

What is standard deviation definition

10/11/2022

What is standard deviation definition

10/11/2022

What is Power BI and how to use it

10/11/2022

What is Power BI and how to use it

10/11/2022

What is ITIL 4 Foundation

13/04/2022

What is ITIL 4 Foundation

13/04/2022

Best laptop for hacking

10/04/2022

Best laptop for hacking

10/04/2022

Best laptops for podcasting 2022

10/04/2022

Best laptops for podcasting 2022

10/04/2022